- CloudBerry Backup lost folder issues: update

- CloudBerry Backup lost folder issues: upgrade

- CloudBerry Backup lost folder issues: license

We have a legacy version running (Cloud Backup for Windows Server (Legacy), $79.99) that doesn't have this limitation but isn't available to buy. Could we swap the license we have for that one? We don't need the extra features like MS Exchange backup; we just need to get around the 1TB backup limit.

How (sic) told you the legacy version didn't have the limit? It comes with the same limit as every other edition.

We are still running the legacy version on one of our servers, showing over 11TB of data transferred in just one of our backups.

This may be because you purchased it before August 2014.

If you can afford Veeam and to store 11TB in the cloud, I am sure you can show some respect to CloudBerry.


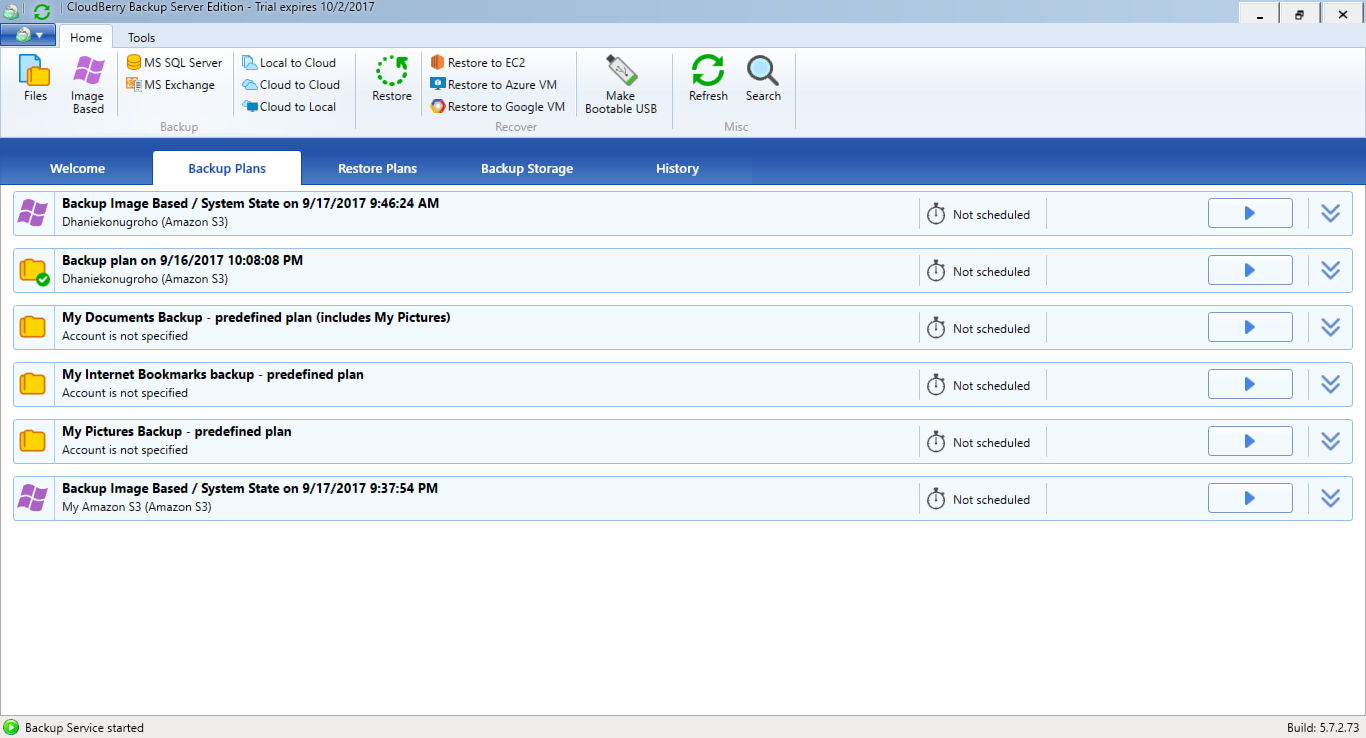

I just had this nice little exchange with one of their support staff:

> We have purchased the "CloudBerry Backup Server Edition", but want to upgrade to the "Ultimate (former Enterprise) Edition" as we are reaching the error: "Product total size limit (1.00 TB) is exceeded." Do you have an upgrade path? I don't see it mentioned on your website.

> You need to use Ultimate edition. You can upgrade by paying the difference. We have an upgrade self-service that will allow you to get an upgrade link. Make sure to release the license in the Help menu before applying for the upgrade coupon.

The upgrade price seems a bit steep (£180).

I have a set of video files that were copied from a bucket in another AWS account into my own bucket in my account. I'm running into a problem: I receive Access Denied errors on all of the files when I try to make them public. Specifically, I log in to my AWS account, go into S3, and drill down through the folder structure to locate one of the video files. When I look at this specific file, its Permissions tab does not show any permissions assigned to anyone: no users, groups, or system permissions have been assigned. At the bottom of the Permissions tab, I see a small box that says "Error: Access Denied".

There are over 15,000 files, around 60 GB in total, and I'd like to avoid downloading and re-uploading all of them. Is there a way I can gain control of these files so that I can make them public? With some assistance and suggestions from the folks here, I have tried the following: I made a new folder in my bucket called "media".

I managed to reproduce this and can confirm that users in Account B cannot access the file, not even the root user in Account B! Fortunately, things can be fixed. The `aws s3 cp` command in the AWS Command-Line Interface (CLI) can update permissions on a file when it is copied onto the same name. However, to trigger this, you also have to change something else about the object; otherwise, you get this error:

> This copy request is illegal because it is trying to copy an object to itself without changing the object's metadata, storage class, website redirect location or encryption attributes.
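As a sketch of the copy-in-place fix described above, the Python below builds an `aws s3 cp` command that copies every object under a prefix onto itself, applying a new ACL and replacing the metadata so that S3 accepts the self-copy. The bucket name `my-bucket`, the `media/` prefix, the helper names, and the default ACL `bucket-owner-full-control` are assumptions for illustration (`public-read` is the other canned ACL relevant to this thread). It presumably has to be run with credentials that can still read the objects, which here would be the account that originally copied them; that is also an assumption. Note that `REPLACE` discards existing user-defined metadata unless you re-specify it.

```python
import shlex
import subprocess


def copy_in_place_command(bucket, prefix, acl="bucket-owner-full-control"):
    """Build a (hypothetical helper) `aws s3 cp` argv that copies every
    object under s3://bucket/prefix onto its own key.

    S3 rejects a self-copy unless something about the object changes;
    replacing the metadata satisfies that rule, and the ACL given with
    --acl is applied to each object as part of the same copy.
    """
    uri = f"s3://{bucket}/{prefix}"
    return [
        "aws", "s3", "cp", uri, uri,
        "--recursive",                      # every object under the prefix
        "--acl", acl,                       # canned ACL for each copied object
        "--metadata-directive", "REPLACE",  # the "something else" that changes
    ]


def run(cmd, dry_run=True):
    """Print the shell-quoted command; execute it only if dry_run is False."""
    print(shlex.join(cmd))
    if not dry_run:
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical bucket and prefix; substitute your own before running.
    run(copy_in_place_command("my-bucket", "media/"))
```

Keeping `dry_run=True` by default means the command is only printed, so it can be checked against a small test prefix before touching all 15,000 files.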